Hate to be the burster of bubbles for anyone out there excited to unload their work on ChatGPT, but PwC Australia has told its people that, for now, playing around with AI should happen strictly off the clock.

The Australian Financial Review reports that in this morning’s internal newsletter, PwCers were told not to feed client data into ChatGPT and to be wary of potentially conflicting output from this emerging technology.

PwC is encouraging staff to experiment with ChatGPT but has warned them against using any material created by the artificial intelligence chatbot in client work, as it explores ways to make use of such breakthrough technology in its operations.

The consulting group’s guidelines about the use of AI tools, sent out in the PwC internal newsletter on Monday morning, ban sharing firm or client data with such third-party tools and say the technology is prone to producing false information.

“PwC allows access to ChatGPT and we encourage our people to continue to experiment with ChatGPT personally, and to think about the role generative AI could play in our business, subject to important safeguards,” said Jacqui Visch, the firm’s chief digital and information officer.

“Our policies don’t allow our people to use ChatGPT for client usage pending quality standards that we apply to all technology innovation to ensure safeguards. We’re exploring more scalable options for accessing this service and working through the cybersecurity and legal considerations before we use it for business purposes.”

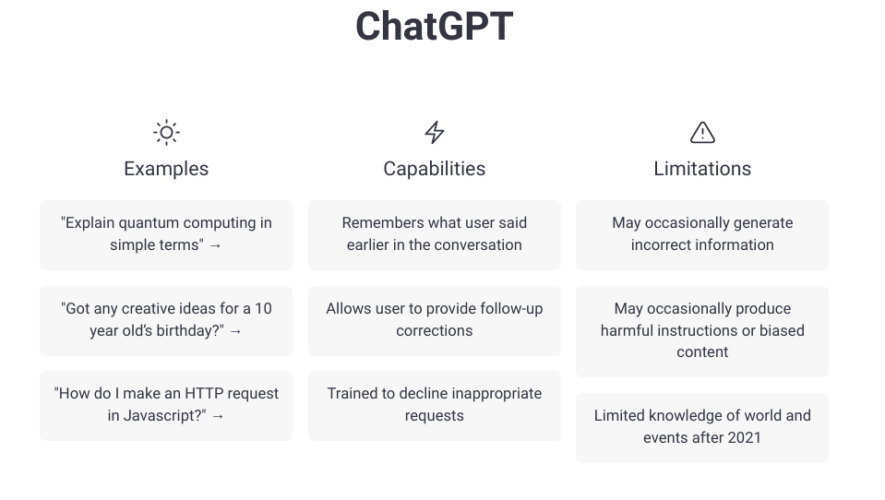

Beyond the obvious infosec risks, the message reminded staff that what ChatGPT spits out isn’t reliable and can vary wildly even when you use the same or similar prompts. “They are stochastic in nature (meaning you could get a different answer every time you ask the same question), they can present inaccuracies as though they are facts, and they are prone to user error. They will require review and oversight and cross-validation of results before they can be relied upon for tasks that demand precision,” wrote Visch.

ChatGPT essentially warns you of this when you first access the tool, and once you get through that screen, the next one tells you not to share sensitive data.

The firm is actively looking into ways to use AI tools in a “consistent manner across the firm”, she said. She went on to say that any staff wishing to use AI output have to run that by the legal department or risk management first. It sounds like anyone who does so will, for now, be hit with an emphatic “NO.”

AFR goes on to say that while Deloitte too allows access to ChatGPT on work computers, KPMG “has taken a more conservative approach” and has blocked ChatGPT on firm PPE except for limited use by teams investigating its potential.

PwC warns staff against using ChatGPT for client work [AFR]